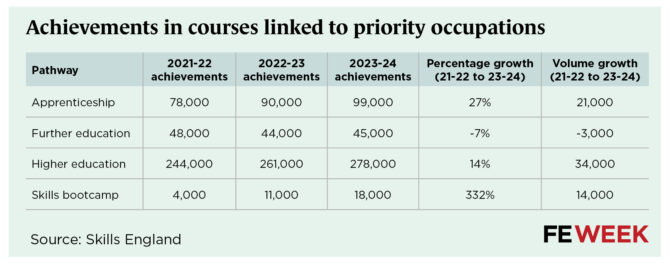

Mainstream further education courses linked to the government’s priority industrial strategy sectors have declined, while higher education, apprenticeships and skills bootcamps all grew.

Skills England’s first annual skills report showed FE achievements linked to priority occupations fell by 7 per cent between 2021-22 and 2023-24, from 48,000 to 45,000.

Over the same period, apprenticeships in those sectors rose by 27 per cent and higher education achievements by 14 per cent. Meanwhile, the rollout of skills bootcamps now means the short courses account for 18,000 learner achievements in priority sectors.

New individual sector assessments show mismatches between recent training trends and the volumes or qualification levels needed in some priority sectors.

The priority sectors are advanced manufacturing, clean energy, construction, creative industries, defence, digital and technologies, financial services, health and adult social care, life sciences and professional and business services.

Skills England forecasts that priority occupations in those sectors will grow by almost a quarter, creating 1.8 million extra jobs by 2035.

Analysis of the training pipelines into the priority sectors showed the fastest growth was in skills bootcamps, followed by apprenticeships and higher education.

Skills England’s annual report said growing course numbers in construction and digital, for example, “suggests that parts of the education and training pipeline are expanding”. But it added that course growth by itself was “not a guarantee” that job-ready learners with applied capability and work-based experience would meet demand for specific priority roles.

Level crossing

Skills England’s ten sector needs reports reveal a growing mismatch between recent training trends and the volumes and qualification levels its analysis indicates the economy requires.

Across all priority sectors, 62 per cent of new jobs are expected to require training at level 4 and above, and 38 per cent at level 2 or 3.

Adult social care, clean energy and construction are the only sectors where most demand is at levels 2 or 3.

In adult social care, Skills England said 79 per cent of projected new jobs will require level 2 or 3 qualifications and estimated the sector will need 281,300 extra workers by 2035. That is on top of 404,000 workers who are expected to leave the sector and will need to be replaced.

But recent training growth in the sector has been at higher levels, where Skills England said there will be less demand.

FE achievements at levels 2 and 3 dropped by 24 per cent between 2021-22 and 2023-24, while apprenticeship achievements at levels 2 and 3 rose by only 1 per cent.

But nursing and health and social care apprenticeships at levels 4 and 5 grew by 54 per cent and 20 per cent respectively.

Meanwhile, adult social care bootcamps boomed from 240 to 700.

Training in the construction pipeline, however, appears to be better aligned. Skills England said 59 per cent of projected additional jobs will require level 2 or 3 qualifications.

Its analysis of recent training trends showed building and construction apprenticeships were up 60 per cent, and reported bootcamp outcomes rose from 100 in 2021-22 to 5,000 in 2023-24.

But further education courses at levels 2 and 3 grew by only 7 per cent over that period.

Higher power

In other priority sectors, demand is overwhelmingly for higher-level training, and recent training patterns already match it.

In digital and technologies, defence, life sciences and creative industries, at least 80 per cent of projected new jobs are expected to need qualifications at level 4 and above.

Skills England’s analysis of those sectors’ training needs showed existing provision is already dominated by higher education and higher-level apprenticeships.

The annual report said higher education accounted for more than two-thirds of learners entering priority occupations, while level 2 and 3 routes through apprenticeships or further education accounted for just over a quarter.

It also said further education had lower overall alignment with priority occupations, with 29 per cent of employed recent FE leavers entering priority occupations, compared with 54 per cent from higher education and 48 per cent from apprenticeships.

Built by bootcamps

The clearest example of bootcamps driving growth was in construction, where ministers are relying on the skills system to deliver their policies on housebuilding, clean energy and infrastructure.

Skills England said construction-related course growth was “partly due to the rise in the number of skills bootcamps”.

Construction courses linked to priority occupations grew by 25 per cent between 2021-22 and 2023-24, but growth fell to 16 per cent when bootcamps were excluded.

Skills England’s construction skills needs assessment warned the sector faced the largest projected increase in workers of all the priority sectors.

It projected 493,000 extra workers across 30 occupations would be needed by 2035, largely driven by the government’s target to build 1.5 million new homes in this parliament. A further 595,000 workers are expected to leave the sector and need replacing, bringing the total demand for workers to over one million.

Data warning

A technical annex published alongside the annual report and ten skills needs assessments highlighted that available skills bootcamp data is limited.

Unlike FE, HE and apprenticeships, there is no verified “achievement” data for skills bootcamps. Skills England instead used the third and final funding milestone for “outcomes” as a proxy for “evidence of a successful outcome”.

It also said all bootcamps mapped to priority occupations were selected through “expert judgment-based analysis” by DfE’s skills bootcamps team.

Skills bootcamps have faced repeated questions about outcomes and quality over the years.

FE Week reported last year that almost two-thirds of learners in 2022-23 did not secure a job or progress at work after their course, despite starts more than doubling.

Earlier government-commissioned research found more than half of learners on wave two bootcamps already held a level 4 qualification or above, while some participants were offered “inappropriate” interviews, even though a guaranteed job interview is a core part of the programme.

The technical annex also warned against treating the sector forecasts as directly comparable.

Methods used to select priority occupations and project future demand were chosen by sponsoring government departments, with “support from Skills England analysts where required”.

As a result, the annex said methods “differ by sector”.

Across the sector assessments, Skills England repeatedly said projections should be treated as indicative trends and orders of magnitude, not precise forecasts.